soundStrider v0.3.0

Development on soundStrider has continued at a strong and steady pace since my last update. The culmination of over sixty hours of development, its latest minor version includes an ambient visualization for spectators (hello Twitch streamers), a loving port of the classic Soundsearcher objects, and a plethora of minor features, fixes, and enhancements.

Continue reading for new open-eye visuals and audio excerpts as we unpack what went into building soundStrider’s first playable version.

Building a spectrogram

For a game designed to be played with eyes closed, providing an elegant and thoughtful graphical user experience is a secondary objective. Deciding to extend this experience beyond its menus was difficult. My primary fear was that providing visual feedback would discourage players from closing their eyes.

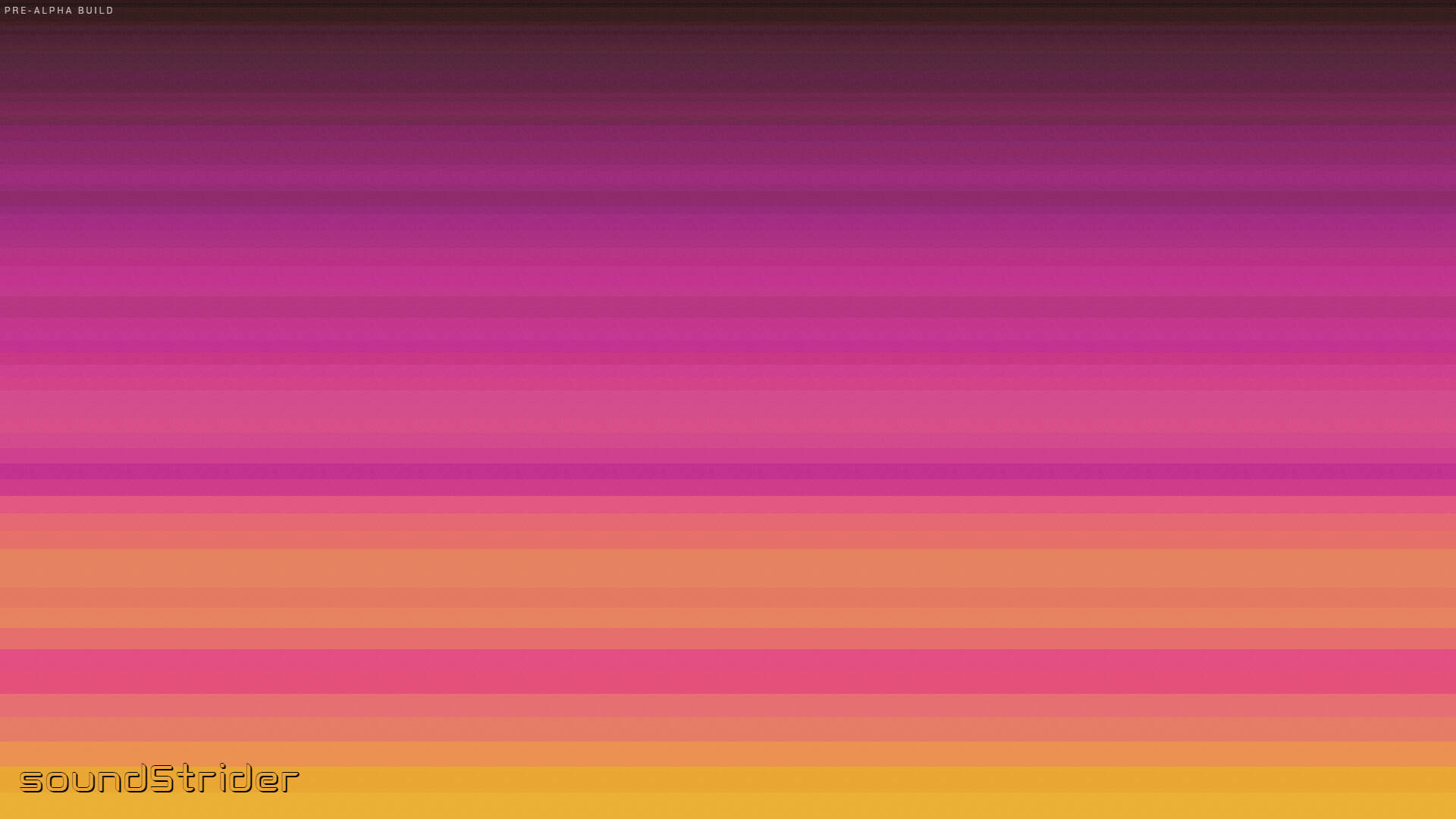

Ultimately, I chose to invest considerable effort into an artful visualization to enhance its branding and make soundStrider more photogenic. By leveraging a spectrogram and a small amount of film grain, it provides a soothing backdrop for spectators without leaking information to players.

The result evokes the sunrise and sunset reflecting off undulating waves—a liminal place I could stay for days.

Gathering frequency data

Creating a spectrogram involves analyzing the frequency content of a signal and plotting the intensities of its frequencies over time.

Before the advent of digital signal processing, spectrograms were typically implemented through an array of narrow bandpass filters that essentially divided a signal’s frequencies into measurable bins. Now we can easily leverage a fast Fourier transform to achieve this with digital precision.

The Web Audio API provides the AnalyserNode for this use case:

    const audioContext = new AudioContext()
    const analyser = audioContext.createAnalyser()

    analyser.fftSize = 512

    const analyserData = new Uint8Array(
      analyser.frequencyBinCount
    )

    // TODO: Create and connect nodes to the AnalyserNode

Calling its getByteFrequencyData method with any Uint8Array will fill it with integers between 0 and 255 that map to the intensities of each frequency bin.

We’ll use that later to determine the colors for each bin.
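To relate those bins back to the signal, it can help to convert indices and byte values into physical units. The sketch below is an illustration rather than code from soundStrider: getBinFrequency() and byteToDecibels() are hypothetical helper names, the 44.1 kHz sample rate is an illustrative assumption, and the decibel defaults mirror the AnalyserNode defaults for minDecibels and maxDecibels.

```javascript
// Hypothetical helpers (not part of soundStrider) for interpreting AnalyserNode output.

// Bins are spaced linearly from 0 Hz up to the Nyquist frequency,
// so bin i starts at i * sampleRate / fftSize.
function getBinFrequency(index, sampleRate = 44100, fftSize = 512) {
  return index * sampleRate / fftSize
}

// Byte values span the analyser's [minDecibels, maxDecibels] range linearly.
function byteToDecibels(value, minDecibels = -100, maxDecibels = -30) {
  return minDecibels + (value / 255) * (maxDecibels - minDecibels)
}

console.log(getBinFrequency(1))  // width of a single bin in Hz
console.log(byteToDecibels(255)) // loudest representable level in dB
```

With an fftSize of 512 at that sample rate, each of the 256 bins covers roughly 86 Hz, which is why the lowest octaves share only a handful of bins.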

Dividing screen space

Spectrograms commonly represent frequency and time as spatial dimensions and leverage color for intensity. For the unique aesthetic of soundStrider, I chose to represent the time dimension through overpainting, allowing its colors to fade and blend organically over time. With only one spatial dimension, the spectrogram would need to stretch to fill any 2D space.

At first I simply divided the screen vertically into equal parts; however, I wanted to emphasize the musical relationships between frequencies, so my next step was mapping linear space to logarithmic space so the bins would grow progressively larger in height from the bottom of the screen:

    function getBinY(index, count, canvasHeight) {
      const value = (count - index - 1) * canvasHeight / count

      return Math.floor(
        logInterpolate(1, canvasHeight + 1, value + 1) - 1
      )
    }

    function logInterpolate(min, max, value) {
      const b = Math.log(max / min) / (max - min)
      const a = max / Math.exp(b * max)

      return a * Math.exp(b * value)
    }

Three important caveats are inscribed in getBinY():

- The interpolated value is inverted so that bins are drawn from the bottom to the top of the screen.

- The arguments for its call to logInterpolate() are offset by 1 so it never divides by zero.

- Its return value is rounded down via Math.floor(). This forces bins to be drawn at whole-pixel intervals to avoid sub-pixel rendering issues.

A few optimizations were made for production.

Importantly, the bin heights are cached and recalculated after resize and orientationchange events rather than being calculated each frame.

The calculations were simplified by solving the compound interest formula so that heights are derived from a constant rate of change, resulting in fewer computations than interpolating for each iteration.
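One way to read those two optimizations together is sketched below. It is an assumption about the approach, not the production code: computeBinYs() is a hypothetical name, and it relies on the observation that the offset positions produced by getBinY() form a geometric series, so each one can be derived from the previous with a single multiplication.

```javascript
// Hypothetical sketch: precompute every bin's top edge once per canvas size.
function computeBinYs(count, canvasHeight) {
  // Constant rate of change between consecutive bins (compound decay)
  const rate = Math.pow(canvasHeight + 1, -1 / count)
  const ys = new Array(count)

  // Start from the offset canvas height, mirroring the +1 offsets in getBinY()
  let value = canvasHeight + 1

  for (let i = 0; i < count; i++) {
    value *= rate
    // Clamp at 0 to guard against floating-point drift on the last bins
    ys[i] = Math.max(0, Math.floor(value - 1))
  }

  return ys
}

// Recompute only when the canvas size changes, e.g. inside a resize handler
let cachedBinYs = computeBinYs(256, 600)
console.log(cachedBinYs[0], cachedBinYs[255])
```

Inside the draw loop, the call to getBinY() would then become a lookup into the cached array. Because the running product accumulates floating-point error, an individual position may occasionally differ from getBinY() by a pixel.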

Mapping intensities to colors

To achieve its coloring effect, a spectrogram selects from a color gradient based on the intensity of each frequency bin. This is the perfect use case for linear interpolation and template literals:

    function getBinColor(intensity) {
      const color = blendColors(
        [
          {r: 0, g: 0, b: 0}, // black
          {r: 255, g: 0, b: 0}, // red
          {r: 255, g: 255, b: 0}, // yellow
          {r: 0, g: 255, b: 255}, // teal
          {r: 0, g: 0, b: 255}, // blue
        ],
        intensity / 255
      )

      return `rgb(${color.r}, ${color.g}, ${color.b})`
    }

    function blendColors(colors, percent) {
      // Find lower and upper color
      const count = colors.length - 1

      const ai = Math.floor(count * percent),
        bi = Math.ceil(count * percent)

      const a = colors[ai],
        b = colors[bi]

      // Return early if lower is upper
      if (a === b) {
        return {...a}
      }

      // Find mix between lower and upper color
      const ap = ai / count,
        bp = bi / count,
        mix = scale(percent, ap, bp, 0, 1)

      // Return interpolated color
      return {
        r: Math.round(lerp(a.r, b.r, mix)),
        g: Math.round(lerp(a.g, b.g, mix)),
        b: Math.round(lerp(a.b, b.b, mix)),
      }
    }

    function scale(value, min, max, a, b) {
      return ((b - a) * (value - min) / (max - min)) + a
    }

    function lerp(min, max, percent) {
      return (min * (1 - percent)) + (max * percent)
    }

Let’s unpack these functions:

- getBinColor() returns the color that corresponds to an intensity along a predefined gradient. It uses a template literal to concatenate its channels into an rgb() CSS color value.

- blendColors() accepts an array of equally spaced colors and returns the color at percent along their gradient. Roughly, it determines the colors to blend, determines their blending amount, and returns the interpolated channel values. The return values are rounded because rgb() colors must be unsigned 8-bit integers.

- scale() interpolates from value within range [min, max] to a value within range [a, b].

- lerp() is the standard linear interpolation algorithm that returns the value at percent in the range [min, max]. This could be rewritten as a call to scale() (or vice versa) at the cost of legibility.

Technically, the inclusion of blendColors() here is purely a helpful generalization.

Because soundStrider has two colors with a static red channel, its color mapping logic simply interpolates the green and blue channels directly within its returned template literal.

While the savings are minor, any cycle spent updating its interface is one stolen from updating its physics or audio engine.
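A minimal sketch of that shortcut follows. The two gradient endpoints are placeholders chosen for illustration, since the actual colors are not given here; only the idea of holding red constant and interpolating the other two channels inline comes from the description above. The name getBinColorDirect() is likewise hypothetical.

```javascript
// Hypothetical two-color gradient with a shared red channel.
// These endpoint values are illustrative, not soundStrider's palette.
const low = {g: 0, b: 128}
const high = {g: 192, b: 0}
const red = 255

function getBinColorDirect(intensity) {
  const mix = intensity / 255

  // Interpolate green and blue inline; red never changes
  return `rgb(${red}, ${Math.round(low.g + (high.g - low.g) * mix)}, ${Math.round(low.b + (high.b - low.b) * mix)})`
}

console.log(getBinColorDirect(0))
console.log(getBinColorDirect(255))
```

Compared with blendColors(), this skips the array lookups, the early return, and the intermediate object, at the cost of hard-coding the gradient.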

Painting on the <canvas>

With an AnalyserNode to parse the frequency content of an input mix, functions to position frequency bins in logarithmic space, and functions to select their colors from an interpolated gradient, we are able to draw a spectrogram on a <canvas> each frame:

    const canvas = document.querySelector('canvas'),
      canvasContext = canvas.getContext('2d')

    function draw() {
      analyser.getByteFrequencyData(analyserData)

      const binCount = analyserData.length,
        canvasHeight = canvas.height,
        canvasWidth = canvas.width

      for (let i = 0, y = canvasHeight; i < binCount && y > 0; i++) {
        const nextY = getBinY(i, binCount, canvasHeight)

        canvasContext.fillStyle = getBinColor(analyserData[i])
        canvasContext.fillRect(0, nextY, canvasWidth, y - nextY)

        y = nextY
      }
    }

    requestAnimationFrame(function loop() {
      draw()
      requestAnimationFrame(loop)
    })

Importantly, the for loop within the draw() function initializes a second y variable to keep track of the vertical position of the last bin.

By clamping its value between canvasHeight and 0, it avoids drawing beyond the <canvas> bounds.

The rectangle for each bin is then drawn from the difference of its vertical position and the previous bin.

Finally, draw() is called continuously within a requestAnimationFrame loop to start drawing the spectrogram at a stable frame rate.

Epilepsy warning

According to Success Criterion 2.3.1 of the Web Content Accessibility Guidelines 2.1, content must not flash more than three times in any one-second period unless the flash is below the general flash and red flash thresholds.

To conform to this success criterion, several modifications were made to improve the inclusivity of soundStrider’s spectrogram:

- Fading in. To prevent flashes when sounds begin, bin colors are attenuated by a fractional alpha value so they slowly fade in. This approach favors sustained sounds and smooths short ones.

- Fading out. To prevent flashes when sounds end, bin colors are painted over the previous frame. The alpha of each pixel is then attenuated by a constant rate so they slowly fade out.

- Dithering. A subtle film grain effect is applied to smooth the boundaries of each bin and dither their colors as they blend and fade.
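The two fades can be approximated with standard Canvas 2D compositing. The sketch below is an assumption about how such an effect might be built, not soundStrider's implementation, and it makes no claim of WCAG conformance on its own: fadeOut(), paintBin(), and the default rates are hypothetical. Both helpers take the 2D context as a parameter so they can be exercised outside a browser.

```javascript
// Hypothetical helpers; `context` is expected to be a CanvasRenderingContext2D.

// Fade out: erase a constant fraction of every pixel's alpha each frame.
function fadeOut(context, width, height, rate = 0.05) {
  context.save()
  // 'destination-out' scales existing alpha by (1 - source alpha)
  context.globalCompositeOperation = 'destination-out'
  context.globalAlpha = rate
  context.fillStyle = '#000'
  context.fillRect(0, 0, width, height)
  context.restore()
}

// Fade in: paint each bin translucently over the previous frame, so a
// color only approaches full strength when the sound is sustained.
function paintBin(context, color, y, width, height, alpha = 0.1) {
  context.save()
  context.globalAlpha = alpha
  context.fillStyle = color
  context.fillRect(0, y, width, height)
  context.restore()
}
```

In a draw loop like the one above, fadeOut() would run once at the start of each frame and paintBin() would replace the direct fillRect() call for each bin. Lower rates produce slower transitions.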

Players may be safe in soundStrider, but heed this warning: use the above code at your own risk.

Porting Soundsearcher

Having finally completed the user interface for soundStrider, it was time to begin filling its scenes with props.

A major goal and litmus test for me was experiencing Soundsearcher within the new engine, so porting its sounds into a classic palette was the most logical place to start.

Implementing classic props

Let’s take a seat and chill next to this campfire in the excerpt below:

There are key differences in approach between the two games which impacted the port, including soundStrider’s procedural generation system, psychoacoustic modeling, and reverb system. These improvements primarily affect how props route their sounds, with much of the boilerplate code like spawning and movement being delegated to their parent class. But there were other changes in design philosophy to address:

- Prefabricated synthesizers. Building common circuits from scratch is a repetitious task. Factory functions were created to instantiate AM, FM, noise, and simple synths as parameterized objects so I can focus on mindfully using them rather than mindlessly building them. Each of the classic props’ sounds was reimplemented with these synthesizers to demonstrate their flexibility.

- Deterministic movement. The strategy of procedurally generating soundStrider in chunks is paramount to its bookmarks system and philosophy as a relaxation tool. Implementing deterministic movements within props is therefore key to their predictability from both a user and technical perspective. The movement patterns of the classic props were adjusted so they follow regular paths that remain near their chunks.

- Circadian behaviors. The inclusion of a day-night cycle allows scenes in soundStrider to slowly change over time. Utility functions were created to map the time of day to diurnal, nocturnal, and crepuscular behaviors. For example, if time is a sinusoidal function bound to [-1, 1], then the diurnal behavior maps it to [0, 1] where 1 is the zenith and 0 is any time at night. The behaviors of classic props were then adjusted so their timings favor a specific time of day.
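The diurnal mapping described in the last item can be sketched in a few lines. Only the diurnal case is specified in the text; the nocturnal and crepuscular mappings below, along with all of the function names, are assumptions made for illustration.

```javascript
// `time` is assumed to be a sinusoid in [-1, 1]: 1 at the zenith, -1 at midnight.

// 1 at the zenith, 0 at any time of night (as described above)
function diurnal(time) {
  return Math.max(0, time)
}

// Assumed mirror image: 1 at midnight, 0 at any time of day
function nocturnal(time) {
  return Math.max(0, -time)
}

// Assumed: peaks at 1 when the sun crosses the horizon (time = 0)
function crepuscular(time) {
  return 1 - Math.abs(time)
}

// Example: `phase` runs from 0 to 1 over a full day, with sunrise at phase 0
const phase = 0.125
const time = Math.sin(2 * Math.PI * phase)
console.log(diurnal(time), nocturnal(time), crepuscular(time))
```

A prop could multiply its trigger probability or gain by one of these values so that, for instance, a nocturnal prop falls silent during the day.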

Implementing classic footsteps

Now let’s take a stroll through the classic palette in the excerpt below:

Unlike the props in soundStrider, whose sophistication is required by its underlying systems, porting the classic footsteps was a relatively straightforward process.

The footsteps module here is similar to its predecessor in how it triggers a footstep after the player travels a specific distance. What’s new is how it assesses the current palette and a depth field to determine which footstep sounds to trigger and blend together. This allows palettes to express complex and dynamic surfaces as players pass through.
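The distance-based trigger might look something like the sketch below. Everything in it is hypothetical, including the name createFootstepper(), the stride length, and the callback interface; the text only establishes that a step fires after the player travels a specific distance. Palette and depth-field blending are left out, since their details are not described.

```javascript
// Hypothetical sketch of a distance-based footstep trigger.
function createFootstepper(strideLength, onStep) {
  let accumulated = 0

  return {
    // Call once per frame with the distance traveled since the last frame
    update(distance) {
      accumulated += distance

      // Fire once per full stride, carrying over any remainder
      while (accumulated >= strideLength) {
        accumulated -= strideLength
        onStep()
      }
    },
  }
}

// Example: a stride of 1 unit while moving 0.25 units per frame
let steps = 0
const footstepper = createFootstepper(1, () => steps++)

for (let frame = 0; frame < 10; frame++) {
  footstepper.update(0.25)
}

console.log(steps) // 2 steps after traveling 2.5 units
```

Carrying the remainder forward keeps the cadence tied to distance rather than frame rate, so faster movement naturally produces more frequent steps.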

The classic footstep simply leverages a prefabricated noise synth with a buffer of pink noise. On each step it applies an envelope to its lowpass filter at a frequency consistent with the current movement speed.

Despite its simplicity, it’s only the first step of a long walk.

Future sounds

The immediate future of soundStrider is filled with a diverse list of sound design and compositional tasks.

In the previous weeks, I finalized the list of palettes and the props that populate their spaces and implemented the systems that generate and place them—a topic for a separate post. Ranging from naturalistic to abstract, from the sounds of the beach or rainforest to otherworldly or digital realms, realizing them will involve meticulous modeling and a dash of imagination.

The addition of footsteps similarly opens the door to more complex modeling of spaces and their walkable surfaces. Beyond the basic sound of a hard surface, players can expect to walk through grass, gravel, sand, and water, with a focus on believable randomness and preventing listener fatigue between steps.

Upcoming milestones

Development is still at pace with the roadmap previously described, with the first early access release due in six to eight weeks. With a complete user interface and a framework for implementing future sounds, the next minor update will focus on filling soundStrider with content and laying the groundwork for its quest system.

Ideally I will remain focused and dedicated as development enters this uncharted territory of pure creativity. I look forward to sharing more audio excerpts demonstrating its progress in my next post.